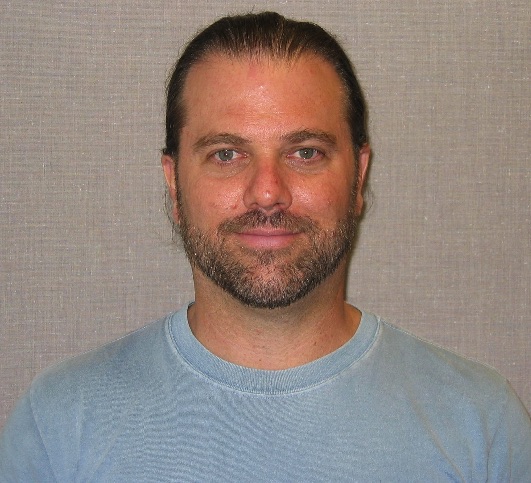

Modular Computing Architecture Costs Energy. Professor Jim Crutchfield and his group will publish an article in Physical Review X on a new, fundamental limit on the energy cost of computing.

In 1961, Landauer famously showed that erasing information requires work, regardless of how the erasure is carried out. This link between logic and energy has guided the thermodynamics of information processing for decades. However, information processing often happens through the composition of many simple modular components, such as the universal logic gates found in modern computer circuits. Indeed, the interchangeability of modular components allows for easy and flexible design of computations. Despite these benefits, we discover an irretrievable energy cost of modular information processing that comes from broken global correlations. This modularity dissipation reveals that, just like erasure, complex structure itself has energetic consequences.
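For a sense of scale, Landauer's bound says that erasing one bit at temperature T costs at least k_B T ln 2 of work. The short sketch below evaluates that bound numerically. The function name `landauer_bound_joules` and the choice of 300 K are illustrative assumptions, not taken from the article.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact value in the 2019 SI)


def landauer_bound_joules(bits_erased: float, temperature_kelvin: float) -> float:
    """Lower bound on the work needed to erase `bits_erased` bits of information.

    Hypothetical helper for illustration: it evaluates k_B * T * ln(2) per bit.
    """
    return bits_erased * K_B * temperature_kelvin * math.log(2)


# Assumed example temperature: 300 K, roughly room temperature.
one_bit_at_300k = landauer_bound_joules(1.0, 300.0)
print(f"Minimum work to erase one bit at 300 K: {one_bit_at_300k:.3e} J")
```

At room temperature the bound is on the order of 10^-21 joules per bit, many orders of magnitude below what present-day hardware dissipates per logic operation.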

The modularity dissipation goes beyond Landauer's Principle, though, since it does not result from the state-space compression that occurs when transforming logical input to logical output. Rather, modularity dissipation quantifies the thermodynamic efficiency of different implementation architectures of the same net computation. For input-output agents that leverage informational resources in their environment, we show that thermodynamically efficient information engines must store all predictable information about their inputs, and so must match the structural complexity of their environment. One benefit is that, while modular computations can cost more than Landauer's bound predicts, it is possible to avoid additional modularity dissipation by properly designing an engine’s computation to store global correlations. In this way, the thermodynamics of modularity links structural complexity to energy through the principle of requisite complexity.
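A toy calculation can make the role of broken correlations concrete. The sketch below is an illustration under stated assumptions, not the paper's own formula: it takes two perfectly correlated bits, resets the first one with an operation that has no access to the second, and compares the Landauer-style entropy change seen locally with the one seen globally. All function names and the two-bit distribution are hypothetical, and work is expressed in units of k_B T ln 2.

```python
import math
from collections import defaultdict


def entropy_bits(dist):
    """Shannon entropy, in bits, of a dict mapping outcomes to probabilities."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def marginal(joint, index):
    """Marginal distribution of one coordinate of a joint distribution over pairs."""
    out = defaultdict(float)
    for outcome, p in joint.items():
        out[outcome[index]] += p
    return dict(out)


def mutual_information_bits(joint):
    """I(X;Y) = H(X) + H(Y) - H(X,Y), in bits."""
    return (entropy_bits(marginal(joint, 0))
            + entropy_bits(marginal(joint, 1))
            - entropy_bits(joint))


def reset_first_bit(joint):
    """Modular operation (assumed for illustration): set X to 0, leave Y untouched."""
    out = defaultdict(float)
    for (_, y), p in joint.items():
        out[(0, y)] += p
    return dict(out)


# Assumed input: two perfectly correlated, uniformly distributed bits.
before = {(0, 0): 0.5, (1, 1): 0.5}
after = reset_first_bit(before)

# Entropy reduction seen by the module acting on X alone.
local_bound = entropy_bits(marginal(before, 0)) - entropy_bits(marginal(after, 0))
# Entropy reduction of the joint system (X, Y).
global_bound = entropy_bits(before) - entropy_bits(after)
# Correlation destroyed by the reset.
lost_correlation = mutual_information_bits(before) - mutual_information_bits(after)

print(local_bound, global_bound, lost_correlation)
```

In this example the module acting on X alone sees one bit of entropy removed, while the joint entropy of the pair does not change at all. The gap between the two equals the mutual information that the reset destroys, which is the kind of cost that a design storing the global correlation could in principle avoid.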

Published: June 25, 2018, 5:34 pm